The Artificial General Intelligence (AGI) debate continues. Those who waste their time 😄 reading my posts know that I believe it will not happen in our lifetime. So why don’t I say that it can never happen?

The most important thing to understand is that there is no standardized, scientific definition of AGI. The confusion that leads many to believe AGI can be achieved is that they measure intelligence in a very quantitative way. It is this very thought process, held for decades, that has now brought us to the point where millions of number-crunching jobs are ready to be replaced by AI.

We are fixated on AI scoring higher and higher on IQ tests and other standardized tests that we have traditionally used to measure intelligence. Remember that every question on a standardized exam follows logic, and that logic can be documented. That is what textbooks and study guides do, after all. But is that all that makes us human? The question matters, because AGI is about artificially mimicking human capabilities.

Let us look at this from another perspective, one that AGI enthusiasts constantly “warn” us about: AI deciding to kill humans.

Let us explore the typical motives for homicide. When does a human choose to kill another human? What reasons do public databases record? If we treat gang-related homicides as turf wars, which ultimately come down to money, and set mass shootings aside, the primary motives are money, passion, anger, hate, fear, and the like. What drives these? Emotions and a moral compass. Mass shootings, in turn, are driven by hate, anger, or mental health issues.

In a nutshell, all of the above are elements of cognition. Cognition is the way we think, experience, and make decisions. Wikipedia defines it as:

“The mental action or process of acquiring knowledge and understanding through thought, experience, and the senses.”

Without realizing it, we use cognition in many of our day-to-day tasks. Cognition lets us make our own decisions based on our own interpretation, for good or bad. That includes crimes, such as one human deciding to kill another. There is no sane logic behind such decisions, and no logic that justifies one human killing another. Such decisions cannot be made from a set of predefined logic OR by culling and interpreting context from documented data.

So, how can an AI decide to kill humans? The only way it can, within the realm of current capabilities and the way we train our AI algorithms, is if it hallucinates, as we have seen almost every public LLM do at some point. All those hallucinations can be traced to errors, training faults, or limitations. You will never deploy AI for something even as mundane as ordering a pencil box until you are convinced it is making the right decision.

One suggested path is to build massive infrastructure and train an AI so extensively that it has “read” almost everything out there, so that it can avoid hallucinations and errors. Theoretically, that is doable. But remember that the underlying method does not change. The algorithm remains a web of massive calculations in the backend. It has no cognition.

Without that cognition, an AI can decide to eliminate humanity only if, through some hallucination rooted in its training, it concludes that this is the right course in specific scenarios. As I consistently highlight, that would be a training issue: the AI lacks enough training data to respond accurately to particular scenarios or prompts. The AI will not make any decisions based on cognition. It is cognition-less. An AI can detect the emotional expressions on a human face that result from our cognition, but it has no cognition of its own. It cannot decide to eliminate humanity out of fear, concern, or anger. The only way it can is if humans design it in a flawed way.

The fact is, unless we are 200% confident that AI is error-proof, we will never deploy it in solutions that can trigger such decisions.

So, when it comes to cognition, AI built with the current approach will never become equivalent to humans. If AGI is defined as mimicking every capability an average human has, it will never happen.

But…

There is a way to build some level of cognition into AI, though not within the realm of current LLM approaches. I am pretty sure people much smarter than me are already aware of this. But even that will not be equivalent to the cognition, and the cognition-driven emotions, that we experience in our day-to-day lives.

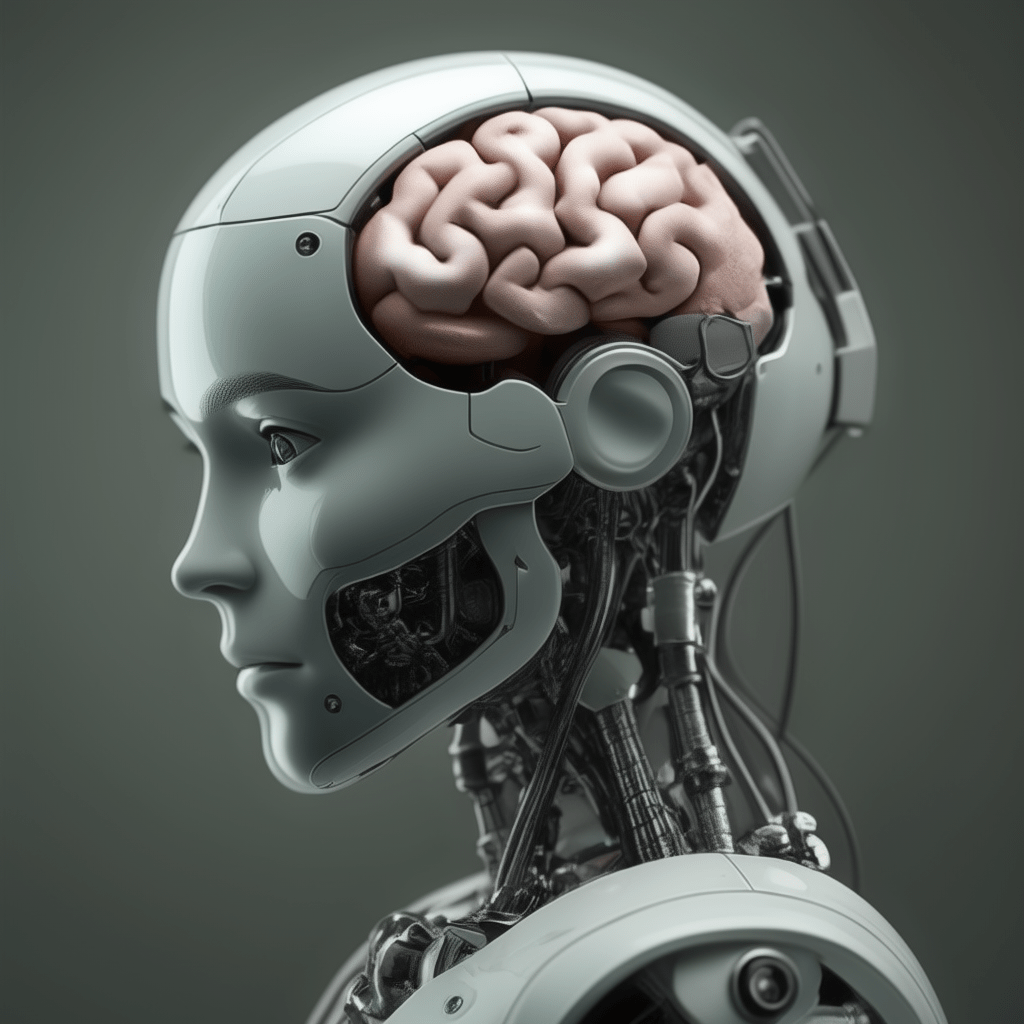

Some of us often underestimate our brains, but the fact is, it is impossible to artificially replicate every capability that they have.

However, AI can kill us by suggesting that we eat glue 😄. No matter how much complicated jargon you read suggesting that the AI is “thinking”, the fact is that it is still calculating.